Notes on Animate-A-Story: Storytelling with Retrieval-Augmented Video Generation

This is a summary of an important research paper. It was made interactively by a human and several AI's. The goal is to curate good ideas and save time.

Link to paper: https://arxiv.org/abs/2307.06940

Paper published on: 2023-07-13

Paper's authors: Yingqing He, Menghan Xia, Haoxin Chen, Xiaodong Cun, Yuan Gong, Jinbo Xing, Yong Zhang, Xintao Wang, Chao Weng, Ying Shan, Qifeng Chen

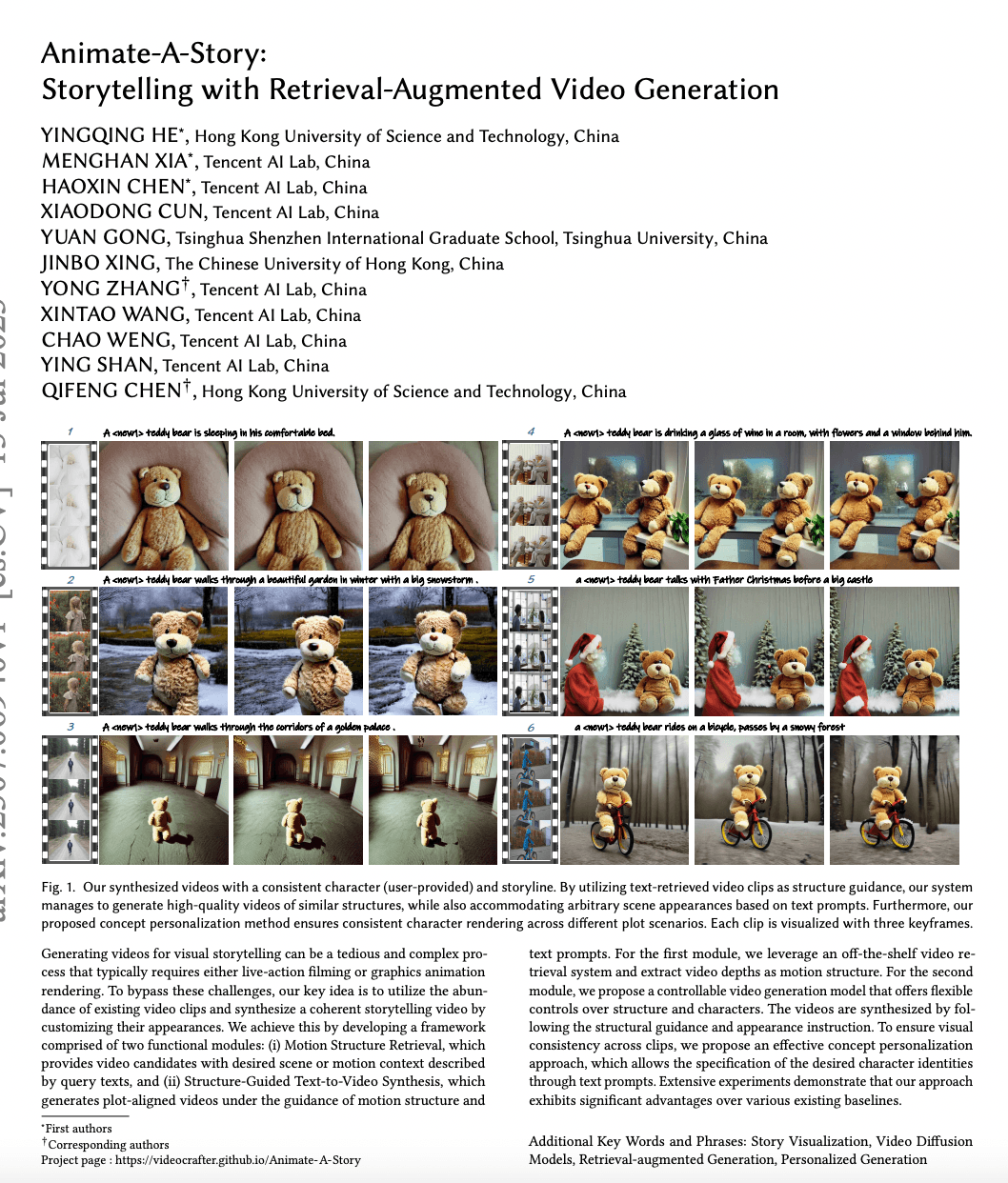

In this research, the authors present a novel approach to the generation of storytelling videos. Imagine a director who wants to create a film, but instead of shooting new footage, they use existing clips and modify them to fit the script. This is essentially what the proposed retrieval-augmented video generation approach does. It uses existing video clips as a guide to generate coherent storytelling videos, offering flexible control over the structure and characters in the videos.

The system is composed of two modules: Motion Structure Retrieval and Structure-Guided Text-to-Video Synthesis. The former is like a casting director, selecting the right clips based on the text prompts and extracting the motion structure from them. The latter is like the film editor, using the retrieved motion structure and text prompts to generate plot-aligned videos.

To ensure visual consistency across different video clips, the researchers propose a concept personalization method. This is akin to a makeup artist ensuring that the characters look the same throughout the film, even though the clips used might be from different sources. This method allows for the specification of desired character identities through text prompts.

The researchers also propose an adjustable structure-guided text-to-video model and a new concept personalization approach, both of which outperform existing competitors. The approach has potential for practical applications in storytelling video synthesis.

Now let's dive into the technical details. The retrieval-augmented text-to-video generation involves text processing, video retrieval, and video synthesis. The structure-guided text-to-video synthesis uses a conditional LDM (latent diffusion model) that is conditioned on both the motion structure and the text prompt.

To generate consistent characters across different video clips, the authors propose a method called TimeInv (timestep-variable textual inversion). This involves learning timestep-dependent token embeddings to represent the semantic features of the target characters.

However, optimizing a single token embedding vector has limited expressive capacity due to limited parameter size. Describing concepts with rich visual features and details in one word is difficult and insufficient. To overcome this, the authors propose timestep-variable textual inversion (TimeInv) to better learn the token depicting the target concept. They designed a timestep-dependent token embedding table to store controlling token embedding at all timesteps.

The authors also used depth signals to control the video generation model and synthesize static concept videos. They added a low-rank weight modulation to capture appearance details of the character. They addressed the conflict between structure guidance and concept generation by making the depth-guidance module adjustable.

The effectiveness of these design choices was validated through ablation experiments. The proposed approach achieved better performance in video synthesis compared to existing models and outperformed previous approaches in terms of semantic alignment and concept fidelity for personalization.

The proposed method can be used for both image and video generation tasks. It shows better background diversity and concept composition in image personalization. The method can serve as a replacement for Textual Inversion and can be combined with custom diffusion for better performance.

The research suggests potential directions for future improvement, such as a general character control mechanism and a better cooperation strategy between character control and structure control. This research opens up new possibilities for content creators, providing a more efficient and accessible way to produce high-quality animated videos.

In conclusion, the proposed framework provides a novel and efficient approach for automatically generating storytelling videos based on storyline scripts or with minimal interactive effort. The key technical designs include motion structure retrieval, structure-guided text-to-video synthesis, and video character rerendering, which together enhance the performance of video generation. The research has numerous practical applications and sets the stage for future advancements in the field of storytelling video synthesis.