Notes on AnimateDiff: Animate Your Personalized Text-to-Image Diffusion Models without Specific Tuning

This is a summary of an important research paper that provides a 12:1 time savings. It was made interactively by a human and several AI's. The goal is to save time and curate good ideas.

Link to paper: https://arxiv.org/abs/2307.04725

Paper published on: 2023-07-10

Paper's authors: Yuwei Guo, Ceyuan Yang, Anyi Rao, Yaohui Wang, Yu Qiao, Dahua Lin, Bo Dai

GPT3 API Cost: $0.02

GPT4 API Cost: $0.08

Total Cost To Write This: $0.1

Time Savings: 12:1

Understanding AnimateDiff: A Framework for Animating Personalized Text-to-Image Models

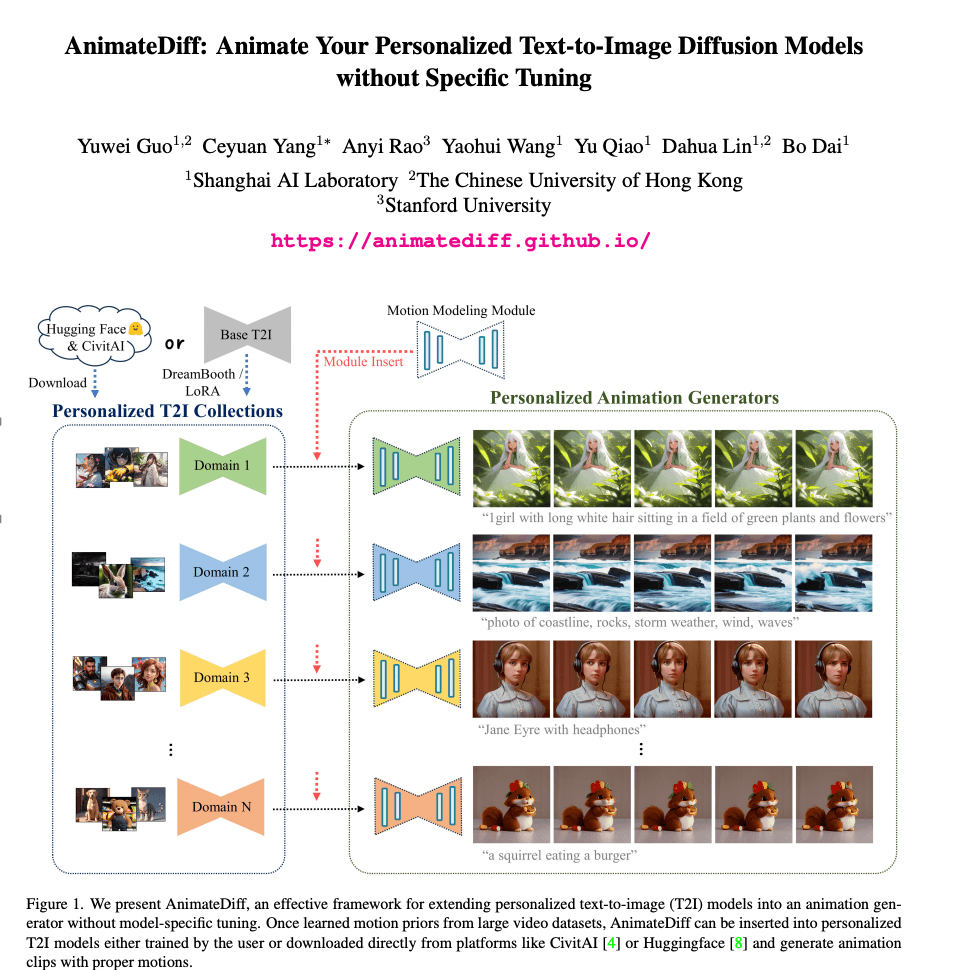

In the realm of AI research, a recent paper introduces a novel framework known as AnimateDiff. This framework is designed to animate personalized text-to-image (T2I) models without the need for specific tuning. This innovation is quite remarkable as it allows for the generation of animated images with proper motions, all while preserving the domain and diversity of the outputs.

The AnimateDiff Framework

AnimateDiff is designed to insert a motion modeling module into a frozen T2I model. This module is trained on video clips to learn motion priors. Once trained, this module can be inserted into personalized T2I models, thus enabling them to generate animated images with appropriate motions.

Here's the exciting part: this method does not require model-specific tuning or additional data collection. This makes it a versatile and efficient tool for animating personalized T2I models across diverse domains without compromising their domain knowledge.

Personalized Image Generation

The research paper delves into personalized image generation. This involves fine-tuning a pre-trained T2I model on images of a specific domain. Two models are highlighted in this context: DreamBooth and LoRA.

DreamBooth uses a rare string as an indicator to represent the target domain. It generates regularization images to associate this rare string with the expected domain during the fine-tuning process.

LoRA, on the other hand, fine-tunes the model weights' residual and decomposes ∆W into two low-rank matrices. This approach helps reduce both overfitting and computational costs.

Personalized Animation

The process of personalized animation involves training a motion modeling module on video datasets and inserting it into a personalized T2I model at inference time. This motion modeling module is a vanilla temporal transformer that captures temporal dependencies between features.

This motion modeling module is trained using a diffusion schedule and the training objective includes an L2 loss term. This training method aids in creating animations with vividness and realism.

The Magic of the Motion Modeling Module

The motion modeling module in AnimateDiff is a significant component of the framework. It can generate natural and proper motions in animated images. Trained on base text-to-image models, this module can be inserted into other personalized models.

The module learns motion priors from large video datasets and maintains temporal smoothness through efficient temporal attention. This results in the generation of temporally smooth animation clips.

The Power of Transferable Visual Models

The research paper also focuses on learning transferable visual models from natural language supervision. It explores the limits of transfer learning with a unified text-to-text transformer and proposes hierarchical text-conditional image generation with clip latents.

High-resolution image synthesis with latent diffusion models is presented, with the U-net model being discussed as a convolutional network for biomedical image segmentation. Photorealistic text-to-image diffusion models with deep language understanding are developed.

Introducing the Laion-5b Dataset

The paper introduces the Laion-5b dataset, an open large-scale dataset for training next-generation image-text models. This dataset paves the way for personalized text-to-image generation without test-time fine-tuning, as demonstrated by the Instant-booth model.

Video Generation Models

The Make-a-video model enables text-to-video generation without text-video data, while the Tune-a-video model allows for one-shot tuning of image diffusion models for text-to-video generation. The Magicvideo model achieves efficient video generation with latent diffusion models.

Conditional Control and Representation Learning

The paper also delves into conditional control added to text-to-image diffusion models and discusses neural discrete representation learning as a method for representation learning. The Attention is All You Need model is presented as a method for attention-based sequence-to-sequence learning.

Conclusion

The AnimateDiff framework and its motion modeling module present a significant advancement in the field of AI research. They enable the animation of personalized T2I models without specific tuning, thus offering a new avenue for creating animated images with proper motions. The research also explores transfer learning, text-conditional image generation, and representation learning, providing a comprehensive understanding of the current state of AI research.