Notes on Augmenting CLIP with Improved Visio-Linguistic Reasoning

This is a summary of an important research paper that provides a 12:1 time savings. It was crafted by humans working with several AI's. The goal is to save time and curate good ideas.

Link to paper: https://arxiv.org/abs/2307.09233

Paper published on: 2023-07-18

Paper's authors: Samyadeep Basu, Maziar Sanjabi, Daniela Massiceti, Shell Xu Hu, Soheil Feizi

GPT3 API Cost: $0.03

GPT4 API Cost: $0.11

Total Cost To Write This: $0.13

Time Savings: 12:1

The ELI5 TLDR:

This research is about improving a model called CLIP, which is used to understand images and text. CLIP is good at understanding individual words but struggles with complex sentences. The researchers propose a new method called SDS-CLIP to enhance CLIP's understanding of sentences by using knowledge from other models. They use a score called denoising diffusion score to help CLIP understand the relationships and attributes of objects in images better. This method has shown improvements in CLIP's performance on tasks like understanding relationships between objects in images. It doesn't affect CLIP's ability to classify images without prior training. However, there are challenges in implementing this method, and future research could focus on overcoming these challenges. Overall, this research opens up new possibilities for improving AI's understanding of images and text.

The Deeper Dive:

Introduction and Summary

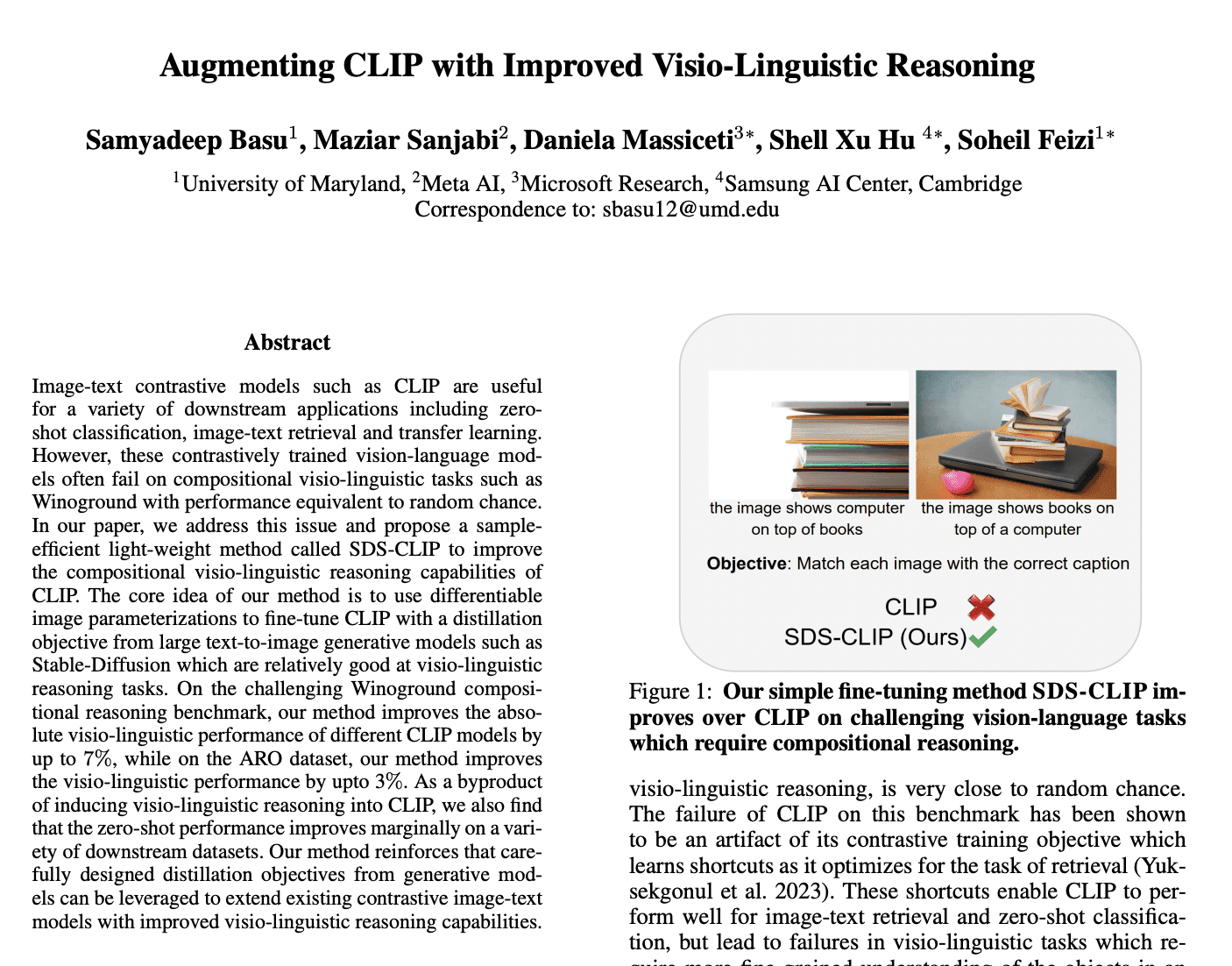

Let's delve into some recent advancements in artificial intelligence, specifically in the realm of visio-linguistic reasoning. The research paper we're going to dissect today introduces a new method to improve the performance of CLIP, an image-text contrastive model. This is achieved through a fine-tuning method called SDS-CLIP, which incorporates knowledge from text-to-image generative models.

The main novelty of this research lies in its approach to enhance CLIP's visio-linguistic reasoning abilities, which have been found to underperform in tasks such as Winoground. By distilling knowledge from text-to-image generative models, CLIP's performance can be improved, even marginally enhancing its zero-shot classification capabilities.

To illustrate, think of CLIP as a translator who is good at understanding individual words but struggles with complex sentences. The new method, SDS-CLIP, is like a tutor who helps the translator understand the deeper meaning and context of sentences, enhancing their overall translation skills.

Understanding CLIP and its Limitations

CLIP, or Contrastive Language–Image Pretraining, is a model that aligns image and text embeddings in a shared space. It does this through a contrastive objective, which matches the embeddings of related image-text pairs and separates those of unrelated pairs. This makes it useful for tasks such as zero-shot classification, image-text retrieval, and transfer learning.

However, CLIP has shown limitations in handling compositional visio-linguistic tasks. This is primarily due to its training objective, which tends to learn shortcuts, leading to failures in complex tasks such as Winoground. Winoground is a benchmark that measures a model's ability to understand the relationships between objects and their attributes in an image, given a textual description.

The Introduction of SDS-CLIP

To address these limitations, the researchers propose SDS-CLIP, a fine-tuning method that incorporates a distillation objective from text-to-image generative models, specifically Stable Diffusion. This model has shown better visio-linguistic reasoning abilities than CLIP.

SDS-CLIP uses what's called differentiable image parameterizations. These are representations of images that can be manipulated through gradient descent, which is a popular optimization algorithm. By incorporating these parameterizations, SDS-CLIP can adjust CLIP's understanding of images to align more closely with the understanding provided by the text-to-image generative model.

The Role of Denoising Diffusion Score

A key component of SDS-CLIP is the denoising diffusion score from Stable Diffusion. This score is used to match images in tasks and has demonstrated superior performance over CLIP on relational and attribute-binding tasks. It essentially acts as a guide, helping CLIP understand the relationships and attributes of objects in images better.

This score is incorporated into CLIP's contrastive objective through a distillation loss called LSDS, which encourages image-text binding. This process of distillation involves freezing internal representations from the UNet (a type of neural network used in Stable Diffusion) and using them to regularize features of the image encoder in CLIP.

The Impact of SDS-CLIP on Performance

The application of SDS-CLIP has shown significant improvements in CLIP's performance on the Winoground benchmark, especially in object-swap and relational understanding sub-categories. It has also improved performance on attribute-binding and relational understanding tasks in the ARO dataset. However, it does not help on tasks where the order of the text is perturbed.

Interestingly, the zero-shot performance of CLIP is not negatively affected by the fine-tuning with SDS-CLIP and may even improve in certain cases. This suggests that the addition of visio-linguistic reasoning abilities does not compromise the model's existing capabilities.

Practical Considerations and Future Directions

The fine-tuning process with SDS-CLIP does have its challenges. For one, it requires backpropagation through the entire UNet, which has a large number of parameters. This necessitates a decrease in batch size during fine-tuning, which could limit the scalability of this approach.

Future research could focus on mitigating these limitations, such as designing distillation methods that don't require backpropagation through the UNet. This would enable larger batch sizes and potentially further improve the results. Additionally, understanding and addressing the deficiencies of text-to-image models on ordering tasks could also be a fruitful area of exploration.

Conclusion

In summary, this research presents a promising approach to improve the visio-linguistic reasoning abilities of CLIP, a widely-used model in AI. By distilling knowledge from text-to-image generative models using a method called SDS-CLIP, we can potentially achieve better performance in complex tasks, all without sacrificing the model's existing capabilities. While there are practical challenges to consider, this research opens up exciting new possibilities for the application of AI in image and text understanding tasks.