Notes on DIALGEN: Collaborative Human-LM Generated Dialogues for Improved Understanding of Human-Human Conversations

This is a summary of an important research paper that provides a 24:1 time savings. It was crafted by humans working with several AI's. The goal is to save time and curate good ideas.

Link to paper: https://arxiv.org/abs/2307.07047

Paper published on: 2023-07-13

Paper's authors: Bo-Ru Lu, Nikita Haduong, Chia-Hsuan Lee, Zeqiu Wu, Hao Cheng, Paul Koester, Jean Utke, Tao Yu, Noah A. Smith, Mari Ostendorf

GPT3 API Cost: $0.06

GPT4 API Cost: $0.18

Total Cost To Write This: $0.24

Time Savings: 24:1

The ELI5 TLDR:

DIALGEN is a special computer program that helps people have conversations. It uses a language model called ChatGPT to make the conversations sound natural. It can be really helpful for getting information from customers in a structured way, like when a customer service agent needs to ask about a car accident. DIALGEN can also keep track of all the important information in the conversation. The researchers made a special dataset called DIALGEN-AIC to train the program. They also found that using some fake data along with real data can make the program work even better. But we have to be careful because sometimes the program might say things that are not nice or invade people's privacy. So we need to make sure it is safe to use.

The Deeper Dive:

DIALGEN: A Human-in-the-Loop Semi-Automated Dialogue Generation Framework

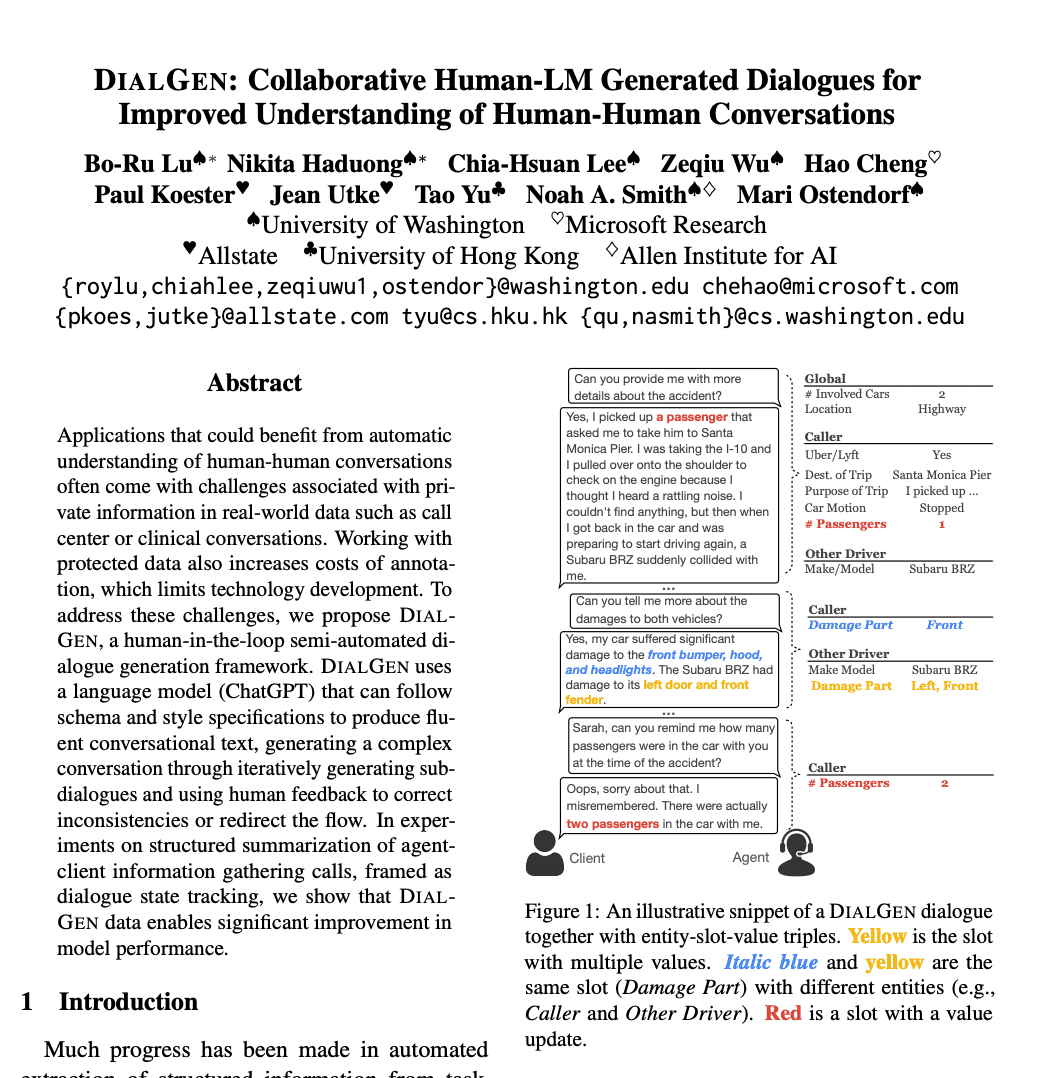

The research paper presents DIALGEN, a semi-automated dialogue generation framework that leverages a language model (in this case, ChatGPT) to generate fluent conversational text. The framework is designed to generate complex conversations by iteratively creating sub-dialogues and using human feedback to correct inconsistencies or redirect the flow of the conversation.

DIALGEN is particularly useful in structured summarization of agent-client information gathering calls. The paper shows that the data generated by DIALGEN can significantly improve model performance. To illustrate, imagine a customer service call in an auto insurance company where the agent needs to gather information from a client about a recent car accident. DIALGEN can automate this process, generating a structured dialogue that efficiently collects necessary information while ensuring the conversation remains coherent and on-topic.

Dialogue State Tracking (DST) in Problem-Solving Setting

The paper also proposes a reframing of dialogue state tracking (DST) to accommodate a problem-solving setting. DST, traditionally, is the task of keeping track of the state of a conversation, understanding what has been said, and what information has been exchanged. In a problem-solving setting, this involves linking information with different entities and tracking multiple values in a single slot.

For instance, in the context of an auto insurance claim call, the DST needs to track the various entities involved (the caller, the other driver, any witnesses, etc.), and the multiple pieces of information associated with each (contact details, statements, etc.). This is a more complex task than traditional DST and requires a new approach.

Entity-Centric DST Scoring Methodology

To accommodate this complexity, the researchers propose a new entity-centric DST scoring methodology. This approach is more suitable for problem-solving settings as it can better handle the intricacy of linking information with different entities and tracking multiple values in a single slot.

DIALGEN-AIC: A Custom Dialogue Dataset

The researchers also introduce DIALGEN-AIC, a custom dialogue dataset that exemplifies the complexity of real-world auto insurance call center data. This dataset includes dialogues with multiple entities and multiple values for a single slot, offering a rich resource for training and testing models in this domain.

DIALGEN Framework Components

The DIALGEN framework includes a prompt for dialogue generation, which is comprised of a task description, entity-slot-value triplets, story, personality, and dialogue history. The language model generates sub-dialogues based on the prompt and the dialogue history, and human reviewers validate, edit, and annotate the generated sub-dialogues. This process continues iteratively until the dialogue is complete.

Dialogue Generation and DST in Auto Insurance Claim Calls

The paper focuses on dialogue generation and DST in the context of auto insurance claim calls. The dialogue generation process involves iterative subdialogue generation, human-in-the-loop review, and annotation of information tuples. DST is used to track the dialogue state and extract structured information from the user's responses.

The DST problem is reframed to handle complex tasks, including the introduction of referents, multiple values for slots, and different ways of updating slot values. Evaluation of DST model performance is done using metrics such as joint goal accuracy (JGA), slot accuracy, precision, recall, and F1 score.

DIALGEN with ChatGPT5: Creating DIALGEN-AIC

The researchers applied DIALGEN with ChatGPT5 as the language model backbone to create DIALGEN-AIC, which contains 235 labeled dialogues. Reviewers were recruited from university listings and compensated at a rate of $18.69 per hour. They underwent training and practiced generating dialogues.

The reviewers aimed to generate dialogues with approximately 50 turns. Each dialogue averaged 8±4 subdialogues. Data collection occurred over 2 months with multiple iterations to improve documentation and task instructions.

Inter-Annotator Agreement (IAA)

Inter-annotator agreement (IAA) was calculated at the turn level with three annotators and 32 dialogues, resulting in an IAA of 78.5% F1. This metric measures the degree of agreement among annotators, providing an indication of the reliability of the annotations.

DIALGEN-AIC and AIC: Comparisons and Uses

DIALGEN-AIC has less variance than AIC (another dataset) across all statistics and is more complex than MultiWOZ. It was split into train/val./test sets with a ratio of 80/10/10 dialogues. Experiments were conducted to measure the effect of adding DIALGEN-AIC data on model performance. Different models and configurations were used, including in-context learning and finetuned transformers.

Synthetic Data and DST

The paper demonstrates that synthetic data can be valuable in combination with real data for improving model performance in DST. Using approximately 2.7K turns of synthetic data yields results similar to using 1.3K turns of real data in DST. The synthetic data is more valuable when combined with real data, and the benefit beyond 50% of the data is minimal.

Limitations and Ethical Considerations

The effectiveness of DIALGEN depends on the language model used and the context input length. Ethical considerations include privacy concerns and the potential for harmful content generation by LMs. Safety features may be necessary when employing humans to collaborate with LMs.