Notes on Empowering Cross-lingual Behavioral Testing of NLP Models with Typological Features

This is a summary of an important research paper that provides a 27:1 time savings. It was made interactively by a human and several AI's. The goal is to save time and curate good ideas.

Link to paper: https://arxiv.org/abs/2307.05454

Paper published on: 2023-07-11

Paper's authors: Ester Hlavnova, Sebastian Ruder

GPT3 API Cost: $0.05

GPT4 API Cost: $0.11

Total Cost To Write This: $0.16

Time Savings: 27:1

Summary: Introducing the M2C Framework

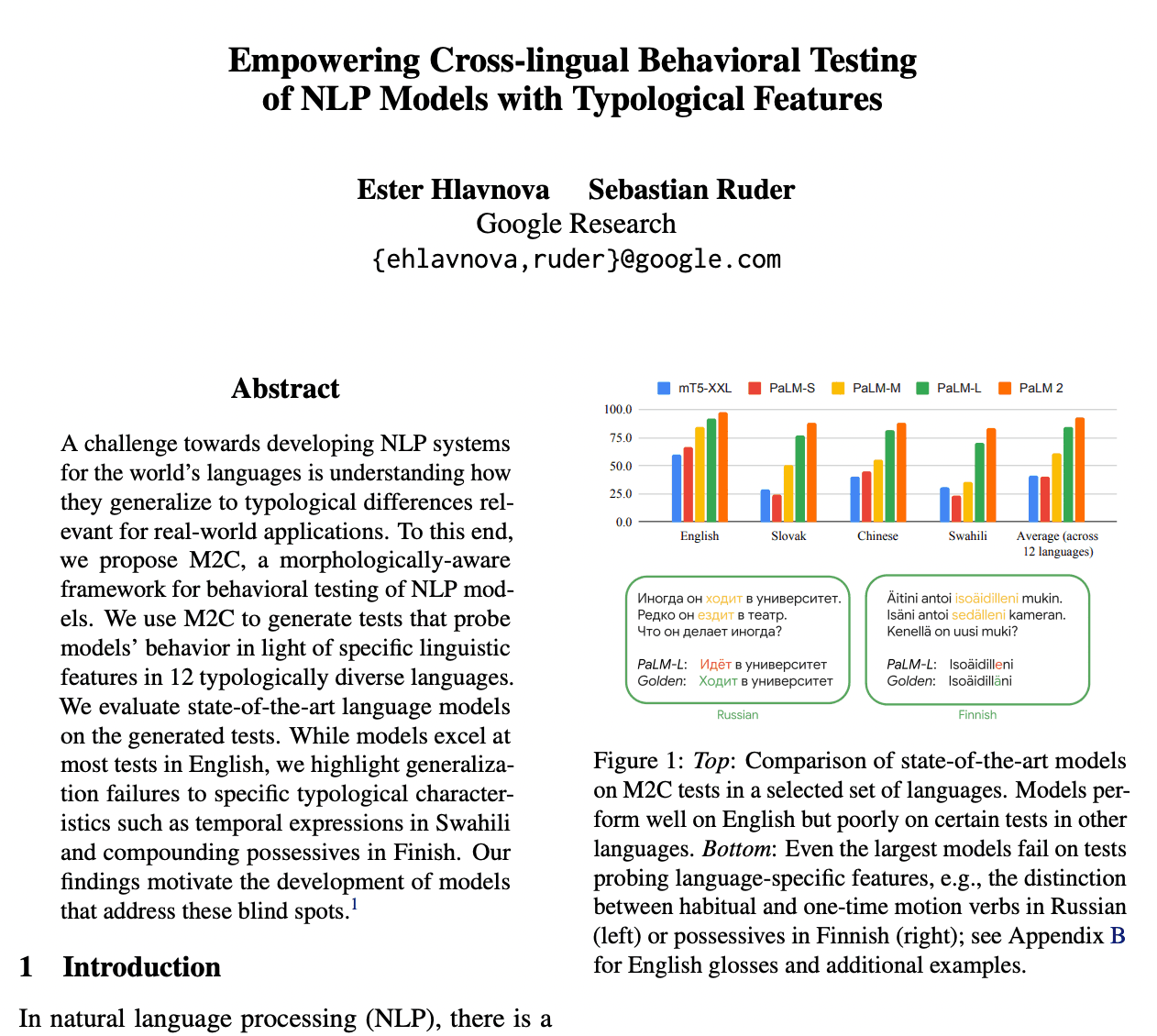

This paper introduces the M2C (Multilingual Multimodal Curriculum) framework, a novel tool for testing the behavior of Natural Language Processing (NLP) models. What makes this framework unique is its morphological awareness. In other words, it takes into account the specific linguistic features of different languages when generating tests.

Imagine you're creating a language model that needs to understand and generate responses in 12 diverse languages. Your model may be excellent at understanding English, but does it understand how to handle temporal expressions in Swahili or compounding possessives in Finnish? This is where the M2C framework comes into play.

The M2C Framework: A Detailed Look

The M2C framework allows for the generation of tests that probe models' behavior in light of specific linguistic features in 12 typologically diverse languages. This includes reasoning capabilities and linguistic features such as negation, numerals, spatial and temporal expressions, and language-specific features. The framework uses templates with placeholders for morphological features to generate these test cases, and models are evaluated on these generated tests in a prompting setting.

The framework follows the UniMorph Schema to describe morphological categories and dimensions. For those unfamiliar with the term, the UniMorph Schema is a universal schema for morphological annotation, which is used to describe the morphological properties of words in any language. In cases where existing dimensions are not sufficient to describe the morphology of placeholders within the templates, language-specific dimensions are introduced.

Advanced Templating and Morphological Integration

The M2C framework includes an advanced templating system with a rich syntax that allows for the description of dependence rules and the generation of diverse output values. This integration of morphology into a broader range of tests is a significant advancement over existing approaches, making the framework more scalable and flexible.

The framework also integrates UnimorphInflect, a tool for generating inflections in multiple languages. While manual inspection is still required for correctness, this integration enables the generation of high-quality tests at scale in multiple languages.

Evaluation and Validation

The M2C framework also includes functionality for answer validation, allowing for the evaluation of generative models using arbitrary templates. This feature is particularly useful for assessing the performance of models on multilingual instruction following and few-shot learning tasks.

M2C: A Middle Ground

The M2C framework occupies a middle ground between libraries that require encoding language-specific rules and generative language models that are highly scalable but less reliable. This balance makes it an invaluable tool for testing the capabilities and typological features of language models across 12 diverse languages.

The Impact of the M2C Framework

The introduction of the M2C framework has important implications for the development and evaluation of NLP models. The framework's ability to generate tests that incorporate morphology and probe language-specific features enables a fine-grained evaluation across diverse languages. This capability is particularly important given the results of the paper's experiments, which showed that even state-of-the-art large language models struggle with certain typological features.

The M2C framework also highlights the need for further research and improvement in multilingual understanding and generation capabilities. By identifying and quantifying morphological errors in model predictions, the framework reveals specific challenges in generating morphologically correct forms.

Conclusion: The Future of NLP Models

This research highlights the need for NLP models that can address blind spots and generalize to typological differences in multilingual scenarios. As we continue to develop and refine these models, tools like the M2C framework will be crucial for identifying areas of weakness and guiding improvements.

In the future, we can expect to see NLP models that are not only more capable of understanding and generating language in a variety of languages, but also more adept at handling the specific linguistic features of those languages. With the help of the M2C framework, we are one step closer to that future.