Notes on Generating Benchmarks for Factuality Evaluation of Language Models

This is a summary of an important research paper. It was made interactively by a human and several AI's. The goal is to curate good ideas and provide a 10:1 time savings.

Link to paper: https://arxiv.org/abs/2307.06908

Paper published on: 2023-07-13

Paper's authors: Dor Muhlgay, Ori Ram, Inbal Magar, Yoav Levine, Nir Ratner, Yonatan Belinkov, Omri Abend, Kevin Leyton-Brown, Amnon Shashua, Yoav Shoham

Let's dive into the essence of the paper which introduces FACTOR, a scalable approach for evaluating the factuality of language models (LMs). It's like having a fact-checker for your language model. FACTOR, like a diligent detective, automatically transforms a factual corpus into a benchmark to evaluate an LM's tendency to generate true facts. The paper presents two benchmarks created using FACTOR: Wiki-FACTOR and News-FACTOR, akin to two different crime scenes where our detective checks for factual accuracy.

The paper also introduces the concept of a contrastive evaluation method. This is like a medical doctor diagnosing a patient by comparing their vital signs to a healthy individual's vital signs. FACTOR perturbs factual statements to create non-factual alternatives, and then evaluates the LM's ability to distinguish between the two.

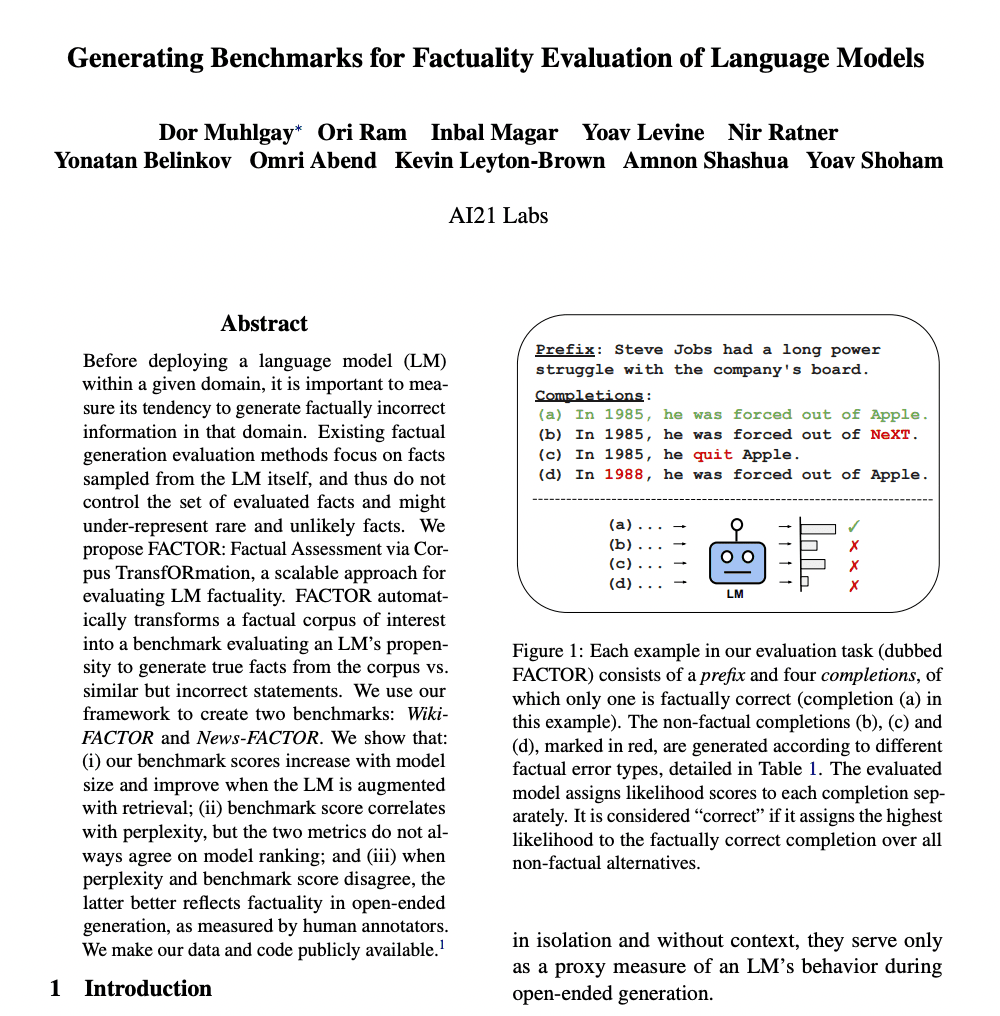

The FACTOR evaluation task consists of a prefix text and four completions, of which only one is factually correct. Think of it as a multiple-choice quiz where only one answer is correct. The evaluated model assigns likelihood scores to each completion separately and is considered correct if it assigns the highest likelihood to the factually correct completion. The FACTOR accuracy, then, is the percentage of examples for which the model assigns higher likelihood to the factual completion than to any of the non-factual alternatives.

The paper also introduces a new concept: a factual error typology. This is a classification system for the types of factual errors that can occur in a text. The error types include predicate errors, entity errors, circumstance errors, coreference errors, and link errors. The dataset creation process involves selecting a prefix and factual completion, generating non-factual completions using these error types, filtering out non-contradictory and non-fluent completions, and selecting the final non-factual completions. The non-factual completions are required to be non-factual, fluent, and similar to the factual completion.

The paper provides experimental findings to show the effectiveness of FACTOR in evaluating LM factuality. It's like a series of lab tests to prove the effectiveness of a new drug. The experiments involve various language models, including GPT-2, GPT-Neo, and OPT models. The research also explores the use of an external corpus for retrieval augmented models, which is like using an external reference book to help answer a quiz.

The paper also compares FACTOR to perplexity as an evaluation metric. While there is a correlation between perplexity and FACTOR accuracy, they can differ in model ranking. In cases where perplexity and benchmark score disagree, the benchmark score better reflects factuality in open-ended generation. This is akin to two judges in a talent show having a different opinion on the ranking of the contestants.

The paper also introduces the concept of FACTOR accuracy, a measure of factuality improvement for retrieval-augmented LM methods. FACTOR accuracy increases with LM size but also depends on the training data. Retrieval augmentation of LM improves FACTOR accuracy.

The paper also presents the FACTOR score, which correlates with the LM's propensity to generate factual information. FACTOR evaluation is cheaper than methods that directly evaluate LM generated text. This is akin to having a cost-effective diagnostic test that can predict a disease based on certain biomarkers rather than conducting an expensive full-body scan.

The paper also highlights the scalability and effectiveness of FACTOR for assessing an LM's propensity for generating factual text. This is like having a scalable diagnostic tool that can be used to assess a large population's health status effectively.

In conclusion, the FACTOR approach provides a scalable, cost-effective, and accurate method for evaluating the factuality of language models. It provides a benchmark for assessing LM's propensity to generate factual text and offers valuable insights into the performance of different LMs and their factual accuracy. It's like having a reliable, efficient, and scalable fact-checker for your language models.