Notes on Giving Robots a Hand: Learning Generalizable Manipulation with Eye-in-Hand Human Video Demonstrations

This is a summary of an important research paper. It was made interactively by a human and several AI's. The goal is to curate good ideas and provide a 10:1 time savings.

Link to paper: https://arxiv.org/abs/2307.05959

Paper published on: 2023-07-12

Paper's authors: Moo Jin Kim, Jiajun Wu, Chelsea Finn

GPT3 API Cost: $0.65

GPT4 API Cost: $0.15

Total Cost To Write This: $0.8

Time Savings: 14:1

Imagine you're a skilled chef, and you're trying to teach a novice how to cook a complex dish. You demonstrate the process, but the novice struggles to replicate it because they lack your years of experience and nuanced understanding of the techniques. Now imagine if there was a way to bridge this gap, allowing the novice to learn from your expertise more effectively. This is the idea behind the research from Stanford University's Department of Computer Science. They have developed a method to train robots using eye-in-hand human video demonstrations, enhancing the robot's ability to generalize tasks by supplementing its training with a diverse range of human demonstrations. This is akin to the novice chef learning from a broad selection of cooking videos from various experts, rather than just one.

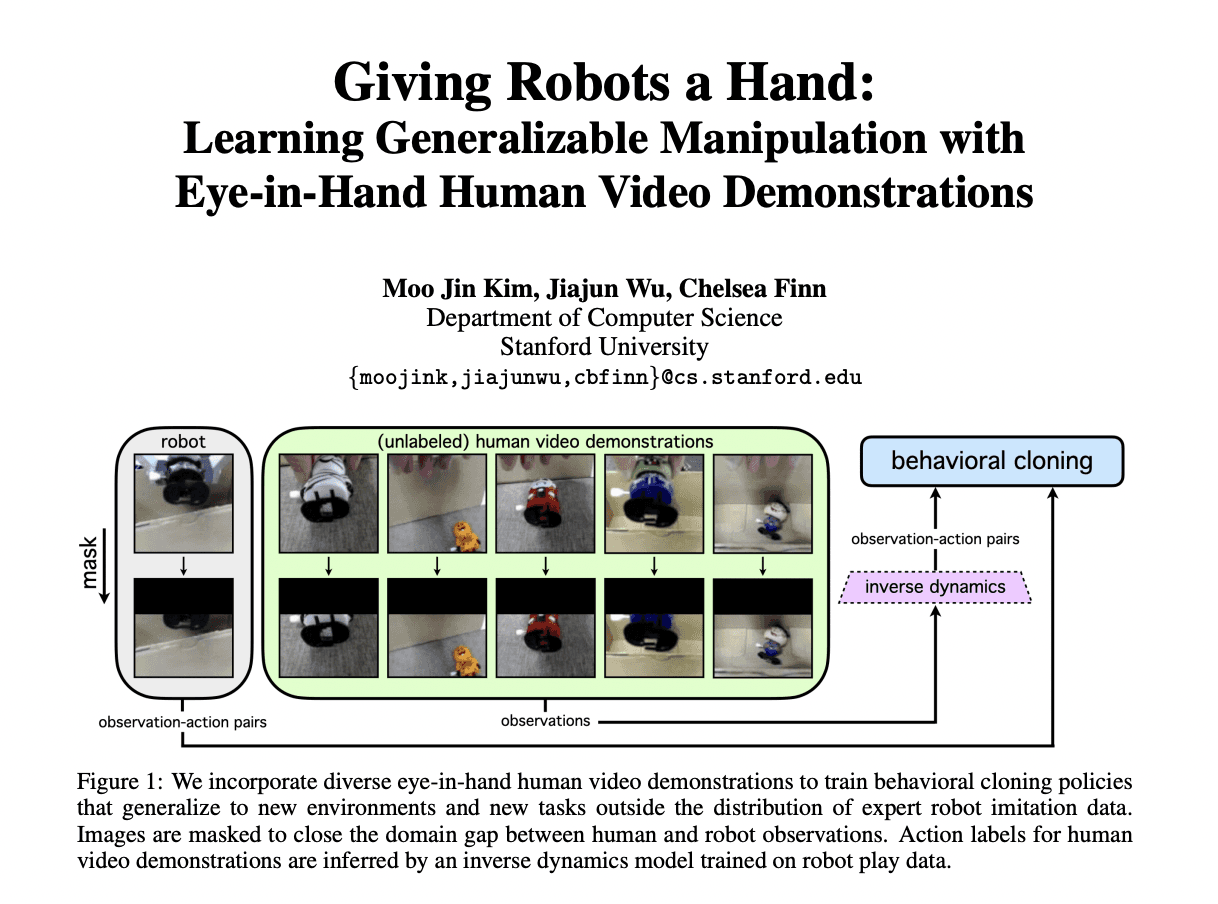

The researchers used a simple fixed image masking scheme to close the visual domain gap between human and robot data, eliminating the need for explicit domain adaptation. This could be compared to providing the novice chef with a detailed recipe that includes precise measurements and cooking times, removing the need for them to guess or adapt the recipe based on their limited experience.

The research involved collecting play data from a robot to train an inverse dynamics model. This model was optimized to minimize the difference between predicted and actual actions for transitions sampled from the play dataset. Think of this as the novice chef practicing a cooking technique, then comparing their results to the expert's, and adjusting their technique based on the differences.

The trained inverse model was then used to label human video demonstrations. This is akin to the novice chef watching a cooking video and noting down each step, ingredient, and technique used by the expert chef. This labeled set of human observation-action pairs was then used to train an imitation learning policy via behavioral cloning. This process is similar to the novice chef practicing the techniques they noted down, trying to imitate the expert chef's actions as closely as possible.

The researchers also modified the behavioral cloning policy to be conditioned on an additional binary variable representing the grasp state at time t (open/closed). This is like the novice chef taking into account whether their hand is open or closed when performing a certain action, such as stirring or chopping.

The researchers tested their method on a suite of eight real-world tasks involving both 3-DoF and 6-DoF robot arm control. The tasks included stacking a red cube on a blue cube, picking-and-placing a red cube onto a green plate, clearing a green sponge from a plate, and packing a small black suit vampire wind-up toy into a box. This is similar to the novice chef being tasked with preparing a variety of dishes, each requiring different cooking techniques and ingredients.

The researchers collected robot demonstrations, human demonstrations, and shared robot play data for the experiments. The results showed that training the policy on the eye-in-hand human video demonstrations with image masking significantly improves task generalization compared to using robot data alone. This is akin to the novice chef improving their cooking skills more effectively by learning from a variety of expert chefs, rather than just practicing on their own.

In some cases, the policy trained on human demonstrations translated to the robot domain via CycleGAN performed worse than simply using the proposed image masking scheme due to errors in image translation. This is similar to the novice chef struggling to replicate a cooking technique they learned from a video because the video was poorly translated into their native language.

The research concluded that both image masking and grasp state are important components for successfully leveraging eye-in-hand human video demonstrations. However, the framework has limitations when the target object is very small, and it requires the collection of a robot play dataset to train the inverse model. Future work aims to automate play data collection.

The research introduces a policy that includes two additional feedforward layers with a latent dimensionality of 64. The policy is conditioned on a 1-dimensional grasp state variable. This variable is combined with a 50-dimensional latent embedding output from the second feedforward layer of the image encoder. The resulting 51-dimensional embedding is passed to the policy head, which outputs an action prediction that imitates the expert demonstrator’s action. Random shifts data augmentation is applied during the training of the BC policy.

The tasks for the research are divided into environment generalization tasks and task generalization tasks. The environment generalization tasks include reaching, cube grasping, plate clearing, and toy packing, all with varying conditions. The task generalization tasks include cube stacking, cube pick-and-place, plate clearing, and toy packing, also with varying conditions.

Data is collected by a human teleoperator controlling a Franka Emika Panda robot arm. The robot interacts with objects in the environment, executing a variety of behaviors. The teleoperator's actions and the robot's responses are logged and stored for inverse model training. The research collected four robot play datasets for the reaching and cube stacking, cube grasping and cube pick-and-place, plate clearing, and toy packing tasks.

In each experiment, a set of expert robot demonstrations and a set of expert human demonstrations are collected. The robot demonstrations are specific to each task, while the human demonstrations include a variety of tasks and conditions.

CycleGAN was used for human-to-robot image translations, which were successful in some cases but noisy in others, affecting final BC policy performance.

The inverse dynamics model was used to label human video demonstrations with actions for the toy packing task. The model made mistakes due to noise in the CycleGAN translations, causing the robot gripper to rotate excessively in some cases.

The accuracy of the inverse dynamics model can be checked quantitatively on a validation set and qualitatively by checking if the predicted actions for a human video are sensible.

The results showed that leveraging human demonstrations led to significantly greater environment generalization than using robot demonstrations alone. Removing image masking led to failure in certain environment configurations and removing grasp state led to repeated reattempts to grasp an object even after it had been grasped. These findings highlight the importance of both image masking and grasp state in the successful application of the proposed method.