Notes on GPT4RoI: Instruction Tuning Large Language Model on Region-of-Interest

This is a summary of an important research paper that provides a 16:1 time savings. It was crafted by humans working with several AI's. The goal is to save time and curate good ideas.

Link to paper: https://arxiv.org/abs/2307.03601

Paper published on: 2023-07-07

Paper's authors: Shilong Zhang, Peize Sun, Shoufa Chen, Min Xiao, Wenqi Shao, Wenwei Zhang, Kai Chen, Ping Luo

GPT3 API Cost: $0.03

GPT4 API Cost: $0.10

Total Cost To Write This: $0.13

Time Savings: 16:1

TLDR:

GPT4RoI is a model that can understand regions of interest in images.

It uses instruction tuning to understand and describe different regions in an image.

The model can understand single-region and multi-region instructions.

GPT4RoI is an end-to-end model, meaning it doesn't need any extra steps to work.

It can use any object detector to help it understand the image.

The model was trained on a dataset of image-text pairs.

It can be improved by adjusting the model architecture and getting more data.

The model has limitations and can fail in certain situations.

GPT4RoI can be used to build interactive AI systems and advanced image recognition systems.

It represents a big step forward in vision-language models.

DEEPER DIVE:

Understanding GPT4RoI: A Vision-Language Model for Region-level Image Understanding

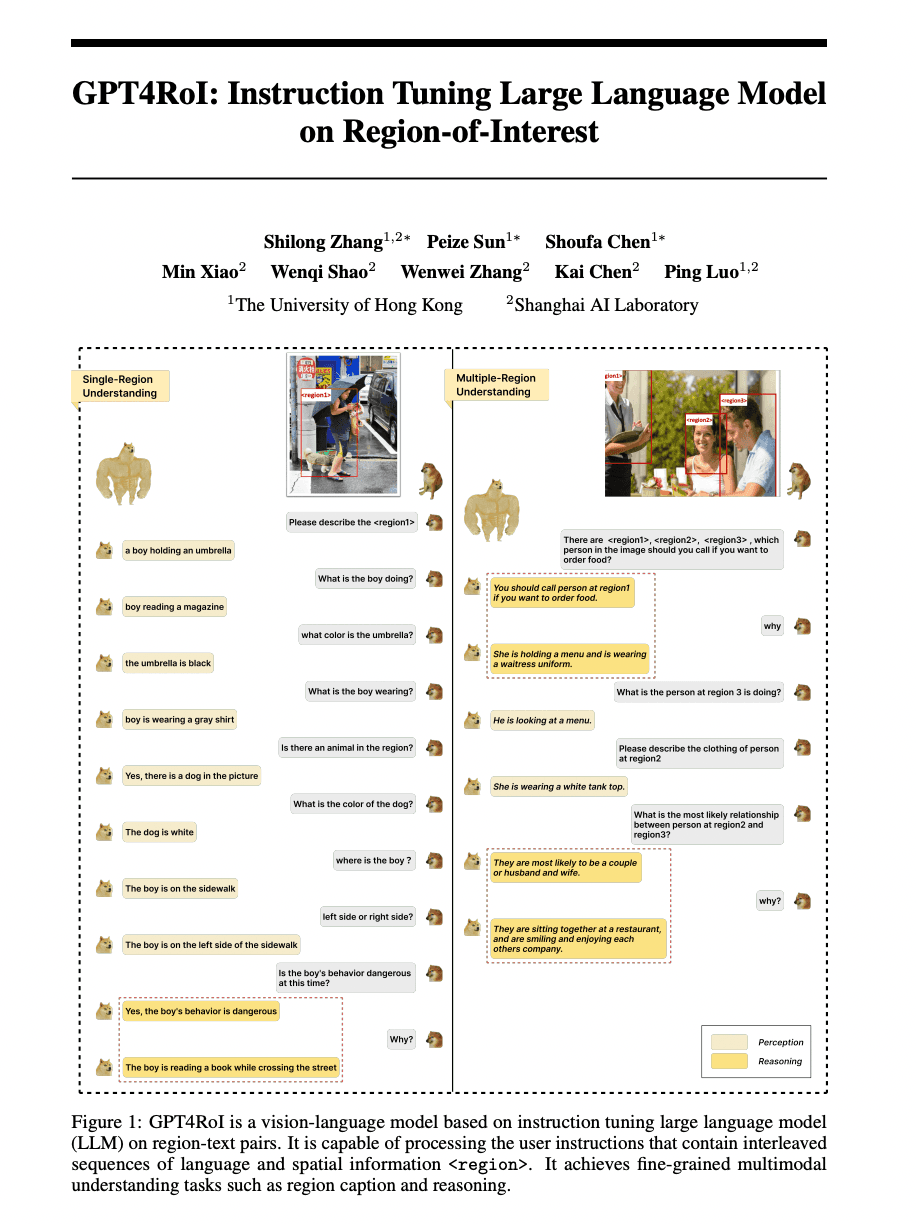

Let's start with the core idea: this research paper introduces a vision-language model dubbed GPT4RoI that is designed to understand regions of interest within an image. It does this by using instruction tuning on region-text pairs, which allows it to achieve fine-grained multimodal understanding tasks such as region captioning and reasoning.

The novelty here lies in the model's ability to reformulate bounding boxes as spatial instructions. This allows it to understand both single-region and multi-region spatial instructions, enabling detailed region captioning and complex region reasoning.

The Underlying Architecture of GPT4RoI

GPT4RoI is an end-to-end model, meaning it takes input, processes it, and provides output without needing any intermediate steps or external processes. This makes it efficient and versatile, as it supports region-level understanding and multi-round conversations.

The model leverages spatial instruction tuning on region-text pairs, where the bounding box and text description of each object are provided. It uses the spatial instruction in conjunction with language instruction to generate a response.

The model is trained on region-text datasets that are transformed from image-text datasets. This means that the model learns to understand regions of an image by associating them with textual descriptions.

Leveraging Object Detectors and Spatial Instructions

An interesting aspect of GPT4RoI is that it can use any off-the-shelf object detector as a spatial instruction provider to extract object attributes. This means it can leverage existing technology and doesn't require bespoke object detection algorithms to function.

The spatial instructions, combined with language instructions, are used to generate a response from the model. This dual-input approach allows for a more nuanced understanding of the image and text data the model is processing.

Training and Fine-tuning GPT4RoI

The model was trained on 8 GPUs with 80G of memory, using two training stages with different learning rates and batch sizes. It uses a cosine learning schedule and a warm-up iteration, which are common practices in machine learning to improve model performance and prevent overfitting.

To save memory during fine-tuning, Fully Sharded Data Parallel (FSDP) is enabled in PyTorch. The model is trained on the VCR dataset, which consists of multiple-choice questions from movie scenes, requiring common-sense reasoning. The dataset is converted into a two-round conversation format to align with the model's multi-round conversation capability.

Potential Improvements and Limitations

Like any model, GPT4RoI has room for improvement. The paper suggests that potential enhancements could include adjusting the model architecture, obtaining more region-text pair data, generating region-level instructions, and incorporating additional interaction modes.

However, the model also has limitations. It may fail in certain scenarios due to limited data and instructions, and issues such as instruction obfuscation and misidentification of fine-grained information have been identified as failure cases.

Future Applications and Implications

The GPT4RoI model brings a new interactive experience where users can interact with the model using both language and spatial instructions. This could potentially be used to build more interactive and intuitive AI systems, such as chatbots or virtual assistants, that can understand and respond to both text and image inputs.

Moreover, the model's ability to understand regions of interest within an image could be used to develop more advanced image recognition systems. For example, it could be used to build a system that can identify and describe specific objects within an image, or to create a tool that can automatically generate detailed captions for images.

In conclusion, GPT4RoI represents a significant step forward in the field of vision-language models. Its ability to understand and respond to both text and image inputs, combined with its detailed region-level understanding, opens up a wide range of potential applications and improvements. As with any model, there are limitations and potential pitfalls, but these can be addressed through further research and development.