Notes on In-context Autoencoder for Context Compression in a Large Language Model

This is a summary of an important research paper. It was made interactively by a human and several AI's. The goal is to curate good ideas and save time.

Link to paper: https://arxiv.org/abs/2307.06945

Paper published on: 2023-07-13

Paper's authors: Tao Ge, Jing Hu, Xun Wang, Si-Qing Chen, Furu Wei

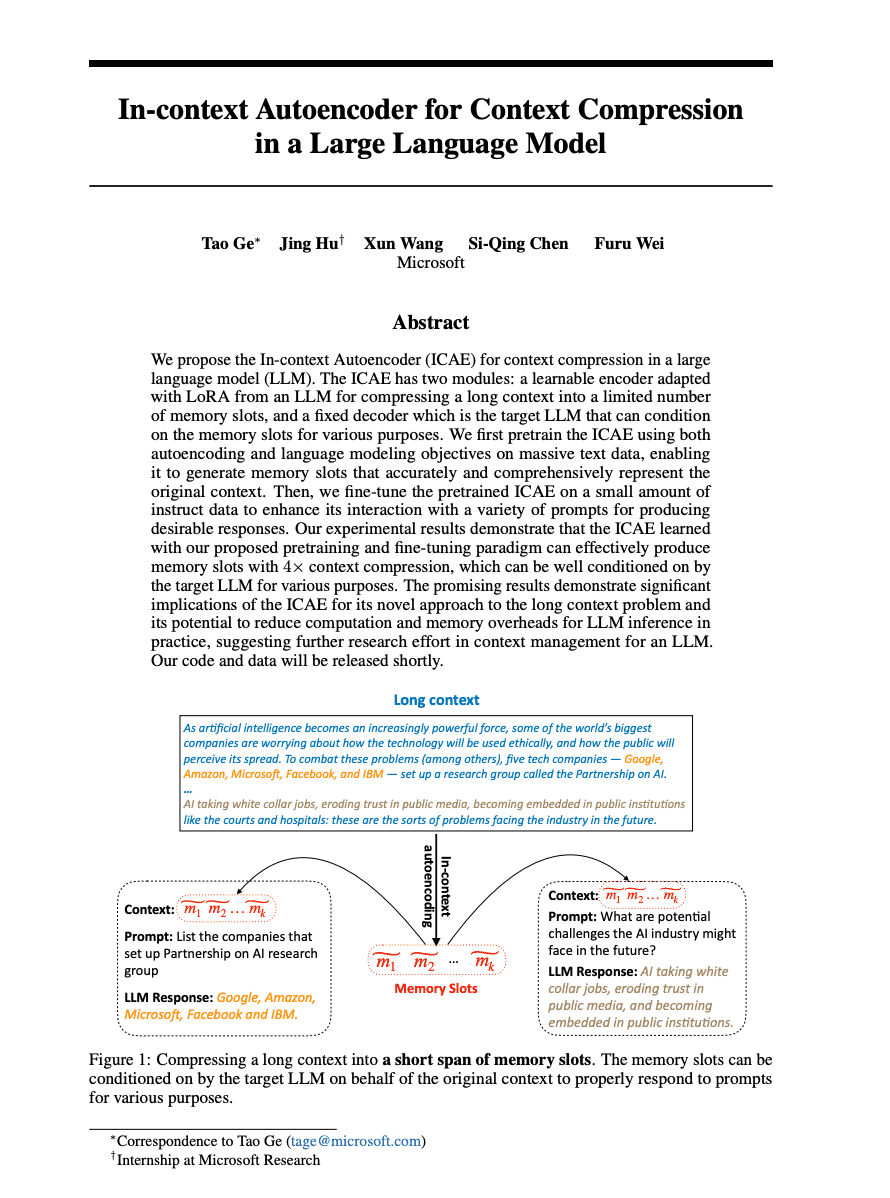

Imagine you're at a party with too many people to remember, and you're trying to keep track of everyone's names, interests, and stories. It's an overwhelming task, and you might find yourself wishing for a way to compress all that information into manageable, digestible chunks. This is the challenge that the In-Context Autoencoder (ICAE) addresses for large language models (LLM) - effectively reducing the long context into a limited number of "memory slots".

The novel aspect of the ICAE is its two key components: a learnable encoder and a fixed decoder. The encoder, using LoRA, works as a memory manager at the party, compressing the long context into memory slots. The decoder, on the other hand, is the LLM that uses these memory slots to recall the context for various purposes.

Pretraining the ICAE on massive text data using autoencoding and language modeling objectives improves its generalization and reduces overfitting. Further, it's fine-tuned on instruct data, enhancing its ability to interact with prompts. This is akin to giving our memory manager at the party a crash course on remembering names and faces, and then fine-tuning their skills with specific instructions on who to remember.

Now, let's delve into the specifics of how the ICAE operates. The encoder uses a LoRA-adapted LLM to add memory tokens to the context, producing memory slots. These memory slots are then used by the decoder to restore the original context. The autoencoding objective here is to restore the original input text from these memory slots.

The performance of ICAE, however, declines as the context length increases beyond 400 tokens. This is similar to our memory manager at the party struggling to remember details as the crowd size increases. Furthermore, the length of the memory slots, denoted by 'k', impacts the ICAE's ability to memorize longer samples. Lower 'k' values result in poorer performance, just like having fewer memory slots would make it harder for our memory manager to remember everyone at the party.

The ICAE also exhibits a selective emphasis or neglect of certain parts of the information during the memorization process, similar to how humans selectively remember information. It's evaluated using the PLC dataset, which consists of (context, prompt, response) samples. The PLC test set also helps to evaluate the ICAE's performance in following instructions.

The ICAE has implications for addressing the long context problem and reducing computation and memory overheads in LLM inference. The experimental results show that the ICAE can effectively produce memory slots with 4x context compression, and in following instructions, the LLaMa accessing the ICAE's memory slots outperforms other language models.

The paper also introduces the PROMPT-WITH-LONG-CONTEXT (PLC) dataset, which consists of text, prompt, and answer triplets. This dataset, constructed by sampling 20k texts from the Pile dataset and generating 15 prompts for each text using GPT-4, is used for evaluating the quality of the GPT-4 model. The prompts cover various aspects of the text, including topic, genre, structure, style, polarity, key information, and details. The evaluation prompt is formulated for the GPT-4 API, consisting of a task description and three specific examples. The task is to choose the better answer and provide reasons, evaluating the responses based on whether they follow the given instruction and are correct.

The research also compares the performance of memory slots and summaries in context compression. Memory slots significantly outperform summaries, with a win/lose ratio of 1.2. However, the performance is affected by the length of the memory slots, with higher compression ratios leading to lower performance. Pretraining the ICAE improves its performance compared to non-pretrained versions. The pretrained ICAE shows less hallucination and more accurate responses to prompts.

In conclusion, the ICAE is a promising approach to addressing the long context problem and reducing computation and memory overheads in LLMs. It offers a simple and scalable approach to generating memory slots, enhancing the model's capability to handle long contexts. However, the generalization capabilities of the approach are limited, suggesting further research in context/memory management for an LLM.