Notes on Retentive Network: A Successor to Transformer for Large Language Models

This is a summary of an important research paper that provides a 16:1 time savings. It was crafted by humans working with several AI's. The goal is to save time and curate good ideas.

Link to paper: https://arxiv.org/abs/2307.08621

Paper published on: 2023-07-19

Paper's authors: Yutao Sun, Li Dong, Shaohan Huang, Shuming Ma, Yuqing Xia, Jilong Xue, Jianyong Wang, Furu Wei

GPT3 API Cost: $0.03

GPT4 API Cost: $0.08

Total Cost To Write This: $0.10

Time Savings: 16:1

The ELI5 TLDR:

RetNet is a new architecture for large language models that can be trained quickly without sacrificing performance and is cost-effective. It uses a retention mechanism that supports parallel, recurrent, and chunkwise recurrent computation. It also uses gated multi-scale retention to capture complex patterns and dependencies in the data. RetNet performs as well as or better than Transformer models and is more efficient for larger models. It introduces new benchmarks for evaluating language models and has potential applications in summarization, translation, dialogue systems, and multimodal learning. It can also be deployed on edge devices like mobile phones. Overall, RetNet is a promising architecture for large language models.

The Deeper Dive:

Introducing RetNet: A Promising Architecture for Large Language Models

The crux of this recent AI research paper is the proposition of a new architecture for large language models, known as the Retentive Network or RetNet. The novel aspect of RetNet is its ability to achieve training parallelism, low-cost inference, and good performance, all at once. This is akin to solving the "impossible triangle" of machine learning, where these three factors usually have trade-offs.

Imagine a scenario where you have a large language model that needs to be trained quickly, without sacrificing performance, and you also want it to be cost-effective in terms of resources used during inference. This is where RetNet comes into play.

Understanding the RetNet Architecture

RetNet is designed with identical blocks stacked together, each containing a multi-scale retention (MSR) module and a feed-forward network (FFN) module. The MSR module is the key to RetNet's impressive performance.

The Retention Mechanism

The retention mechanism of RetNet supports three computation paradigms: parallel, recurrent, and chunkwise recurrent. This is a major departure from traditional models like Transformers, which primarily rely on parallel computation.

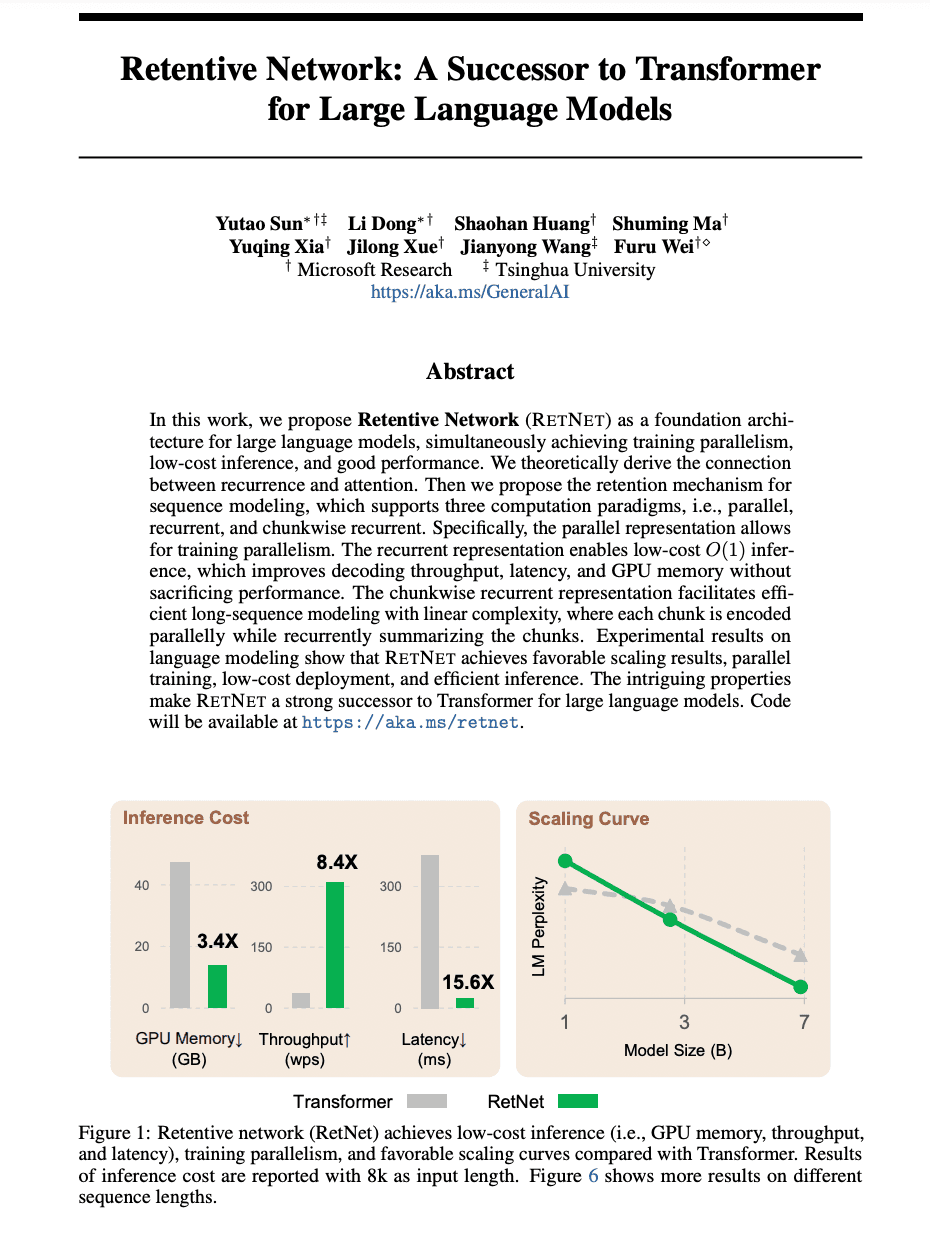

The parallel representation of the retention mechanism allows efficient training with GPUs, thus enabling training parallelism. The recurrent representation, on the other hand, is favorable for inference, improving decoding throughput, latency, and GPU memory usage. Lastly, the chunkwise recurrent representation facilitates efficient long-sequence modeling with linear complexity.

Gated Multi-scale Retention

RetNet uses gated multi-scale retention with multiple heads in each layer. This approach allows the model to retain information at different scales and to control the flow of information between layers. The use of gates and multiple heads enhances the model's ability to capture complex patterns and dependencies in the data.

Performance of RetNet

RetNet not only achieves comparable performance to Transformer models but also outperforms them when the model size is larger than 2B parameters. This makes RetNet a strong competitor to Transformer for large language models.

In terms of inference cost, which includes memory consumption, throughput, and latency, RetNet outperforms Transformer. It also shows favorable scaling behavior with increasing model size, making it a more efficient option for larger models.

Novel Benchmarks Proposed

The paper introduces new benchmarks for evaluating language models, including Hellaswag for sentence completion and Qmsum for query-based multi-domain meeting summarization. These benchmarks provide a more diverse set of tasks to test the models, pushing the boundaries of what language models can achieve.

Potential Applications of RetNet

The research indicates that RetNet can work efficiently with structured prompting by compressing long-term memory. This makes it a promising choice for applications that require understanding and generating long sequences of text, such as summarization, translation, and dialogue systems.

Furthermore, the researchers are interested in deploying RetNet models on various edge devices, such as mobile phones. This indicates the potential of RetNet in real-world applications where low-cost inference and good performance are crucial.

In addition, RetNet's architecture can be used to train multimodal large language models, which can understand and generate content across different types of data, such as text, images, and audio. This opens up a wide range of possibilities for creating more interactive and intelligent systems.

Wrapping Up

In summary, RetNet is a promising new architecture for large language models that achieves the difficult balance of training parallelism, good performance, and low inference cost. Its unique retention mechanism and gated multi-scale retention make it a strong successor to Transformer models, especially for larger models. The potential applications of RetNet are vast, from language understanding and generation tasks to deployment on edge devices and multimodal learning.