Notes on Scale-Aware Modulation Meet Transformer

This is a summary of an important research paper that provides a 24:1 time savings. It was crafted by humans working with several AI's. The goal is to save time and curate good ideas.

Link to paper: https://arxiv.org/abs/2307.08579

Paper published on: 2023-07-17

Paper's authors: Weifeng Lin, Ziheng Wu, Jiayu Chen, Jun Huang, Lianwen Jin

GPT3 API Cost: $0.05

GPT4 API Cost: $0.15

Total Cost To Write This: $0.20

Time Savings: 24:1

The ELI5 TLDR:

This article talks about a new type of computer program called the Scale-Aware Modulation Transformer (SMT) that can do different visual tasks really well. It combines two other types of programs called convolutional networks and vision Transformers. The SMT has two special parts called the Multi-Head Mixed Convolution (MHMC) module and the Scale-Aware Aggregation (SAA) module. The MHMC module helps the program see different sizes of things and the SAA module helps the program put all the information together. The SMT also has something called the Evolutionary Hybrid Network (EHN) that helps the program understand things better as it gets deeper. The SMT is really good at things like recognizing objects in pictures and dividing pictures into different parts. It is better than other programs and uses less computer power.

The Deeper Dive:

A New Vision Transformer: Scale-Aware Modulation Transformer (SMT)

This article discusses a revolutionary new vision Transformer known as the Scale-Aware Modulation Transformer (SMT). The SMT is a hybrid of convolutional networks and vision Transformers, uniquely designed to handle a variety of downstream tasks efficiently. The SMT introduces two novel designs: the Multi-Head Mixed Convolution (MHMC) module and the Scale-Aware Aggregation (SAA) module.

The MHMC module captures multi-scale features and expands the receptive field, while the SAA module enables information fusion across different heads. The SMT also introduces an Evolutionary Hybrid Network (EHN) that simulates the shift from capturing local to global dependencies as the network becomes deeper. This results in superior performance across a wide range of visual tasks, including image classification, object detection, and semantic segmentation.

Key Components of SMT: MHMC and SAA Modules

The Multi-Head Mixed Convolution (MHMC) module is a key component of the SMT. It partitions input channels into multiple heads and applies distinct depth-wise separable convolutions to each head. This allows the module to capture various spatial features across multiple scales.

The Scale-Aware Aggregation (SAA) module is another essential component of the SMT. It enhances information interaction across multiple heads in MHMC by shuffling and grouping features of different granularities produced by MHMC. It then performs cross-group information aggregation using point-wise convolution.

Evolutionary Hybrid Network (EHN)

The SMT introduces an Evolutionary Hybrid Network (EHN) that effectively models the transition from capturing local to global dependencies as the network depth increases. The EHN consists of four stages with downsampling rates of {4, 8, 16, 32}. The top two stages use Scale-Aware Modulation (SAM) and Multi-Head Self-Attention (MSA) blocks to capture local and global dependencies. The penultimate stage sequentially stacks one SAM block and one MSA block to model the transition from local to global dependencies. The last stage solely uses MSA blocks to capture long-range dependencies.

Performance of SMT

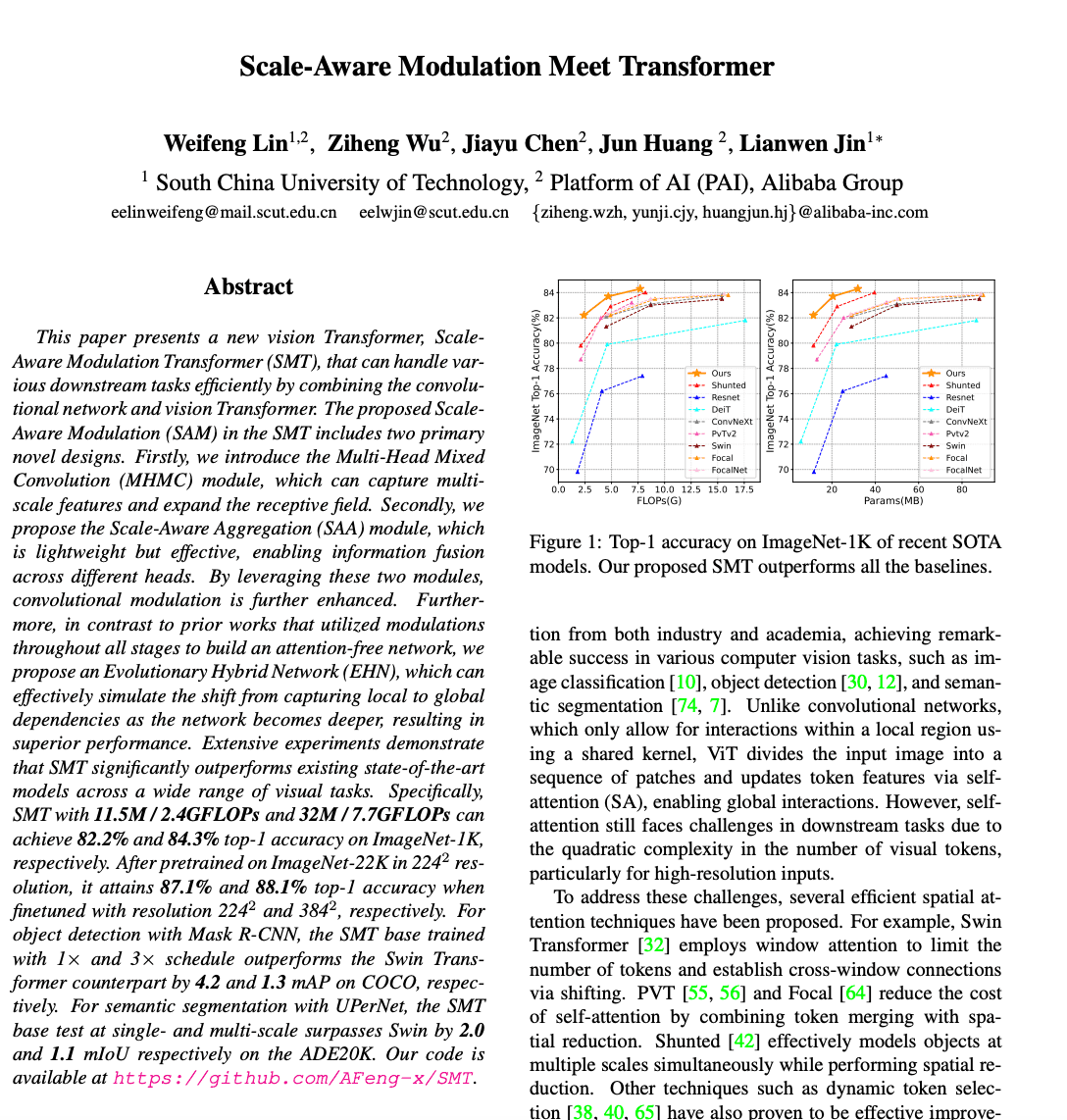

The Scale-Aware Modulation Transformer (SMT) significantly outperforms existing state-of-the-art models across various visual tasks. For instance, SMT achieves top-1 accuracy of 82.2% and 84.3% on ImageNet-1K with different model sizes. SMT also outperforms other SOTA models on COCO and ADE20K datasets for object detection and semantic segmentation tasks. Importantly, SMT requires fewer parameters and incurs lower computational costs compared to other SOTA models.

Hybrid Stacking Strategies

The SMT proposes two hybrid stacking strategies for the penultimate stage: (i) sequentially stacking one SAM block and one MSA block, and (ii) using SAM blocks for the first half and MSA blocks for the second half. These strategies effectively simulate the transition from local to global dependency capture, resulting in competitive performance on ImageNet-1K image classification, MS COCO object detection, and ADE20K semantic segmentation tasks.

Ablation Study

An ablation study conducted on SMT investigates the individual contributions of each component. The multi-head mixed convolution module improves the model's ability to capture multi-scale spatial features and expands its receptive field, resulting in a 0.8% gain in accuracy. The scale-aware aggregation module enables effective aggregation of the multi-scale features captured by the multi-head mixed convolution module, leading to a 1.6% increase in performance. The evolutionary hybrid network stacking strategy in the penultimate stage improves the modeling of the transition from local to global dependencies and results in a significant gain of 2.2% in performance.

Concluding Remarks

In conclusion, the Scale-Aware Modulation Transformer (SMT) presents a new and efficient way to handle various downstream tasks in visual processing. Its unique design, which includes the Multi-Head Mixed Convolution (MHMC) module and the Scale-Aware Aggregation (SAA) module, along with the Evolutionary Hybrid Network (EHN), allows for superior performance across a wide range of visual tasks. The SMT is a promising new generic backbone for efficient visual modeling, achieving comparable or better performance than well-designed ConvNets and vision Transformers, with fewer parameters and FLOPs.