Notes on Toward Interactive Dictation

This is a summary of an important research paper that provides a 23:1 time savings. It was crafted by humans working with several AI's. The goal is to save time and curate good ideas.

Link to paper: https://arxiv.org/abs/2307.04008

Paper published on: 2023-07-08

Paper's authors: Belinda Z. Li, Jason Eisner, Adam Pauls, Sam Thomson

GPT3 API Cost: $0.04

GPT4 API Cost: $0.11

Total Cost To Write This: $0.15

Time Savings: 23:1

The TLDR:

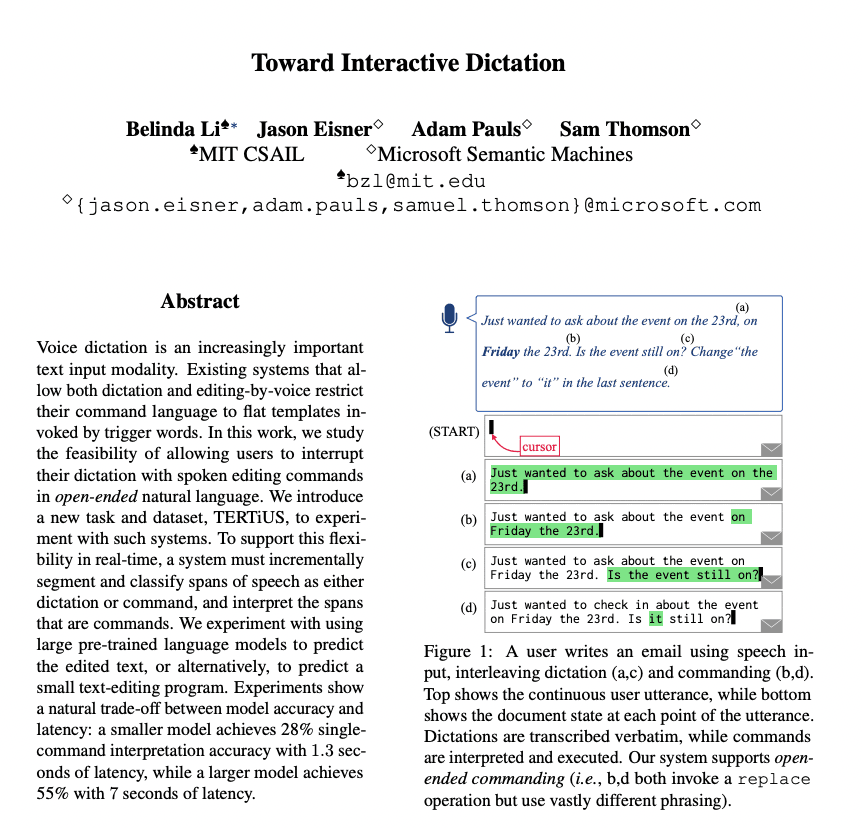

A new system allows users to dictate and edit text using spoken commands in natural language.

The system has different modules that transcribe speech, segment it into dictation and command segments, normalize the segments, and interpret them to update the document.

A dataset called TERTiUS was created to train and evaluate the system.

The segmentation model labels each segment as dictation or command, but struggles with errors and similar commands.

The normalization and interpretation models predict the normalized utterance and document state or update program for each command segment.

The models perform moderately well but have room for improvement.

GPT3 outperforms T5 in ASR repair and interpretation.

Runtimes for each component vary, with ASR repair and interpretation being the main bottlenecks.

The research suggests future work on expanding the dataset and improving the system's accuracy and efficiency.

The system could have implications for virtual assistants, accessibility tools, and collaborative writing or coding assistants.

The Deeper Dive:

Summary: Interactive Dictation System with Natural Language Commands

The research introduces a new task and dataset, TERTiUS, for the exploration of interactive dictation systems. It presents a system that allows users to dictate and edit text using spoken commands in natural language. The system segments and classifies spans of speech as either dictation or command, and then interprets the commands using large pre-trained language models. The research also introduces a novel data collection interface for gathering the dataset, which will be publicly released. The system experiments with two strategies and two choices of pre-trained language models, demonstrating a trade-off between model accuracy and latency. This research is different from previous work on speech repair and ASR error correction, and introduces a new mode of editing with large language models.

The Interactive Dictation System

The system developed in this research is composed of several modules: ASR (Automatic Speech Recognition), Segmentation, Normalization, and Interpretation. The ASR module transcribes the user's speech into text. The Segmentation module divides the transcribed text into dictation and command segments. The Normalization module transforms the segments into clean text. The Interpretation module predicts the document state based on the segments and updates the document accordingly. The system also supports change propagation to update the modules' outputs when there are changes in the input.

TERTiUS Dataset

The TERTiUS dataset was created for training and evaluation of the system. This dataset was collected using a data collection framework involving human demonstrators who provide gold segmentations, normalizations, and document state updates. This addresses the lack of public datasets for interactive dictation tasks.

Segmentation

The segmentation model partitions the transcript into dictation and command segments. It uses BIOES tagging, a common method in named entity recognition tasks, to label each segment. While the model performs decently on the TERTiUS dataset, it struggles with errors from the base ASR system and commands that are similar to dictation.

Normalization and Interpretation

The normalization model predicts the normalized utterance for each command segment. The interpretation model predicts either the document state or an update program for each command segment. These models are fine-tuned T5-base models or prompted GPT3 models, both of which are large pre-trained language models. While the models perform moderately well on the TERTiUS dataset, there is room for improvement. The runtime for the models varies, with the GPT3 models taking longer due to their larger size.

Evaluation and Results

The research evaluates segmentation, ASR repair, and interpretation components using accuracy metrics (F1, EM) and runtime. GPT3 outperforms T5 in ASR repair and interpretation, and both models are better at directly generating states. Runtimes for each component are reported, with ASR repair and interpretation being the main bottlenecks. Generating programs instead of states achieves faster runtimes but lower accuracy.

Conclusion and Future Work

The research introduces a new task for flexible invocation of commands through natural language and a new dataset, TERTiUS, for this task. It explores trade-offs between model accuracy and efficiency and aims to increase accessibility for those who cannot use typing inputs. However, the dataset is limited in size and does not support reliable dialogue-level evaluation metrics. The trained system is not production-ready and user studies have not been conducted. Future work is suggested on expanding the diversity of languages, dialects, and accents covered.

Implications and Applications

This research could have significant implications for the development of virtual assistants, accessibility tools, and collaborative writing or coding assistants. By enabling users to interrupt dictation with spoken editing commands in natural language, the system could make these tools more intuitive and efficient, particularly for users who are unable to use traditional typing inputs. However, the system's potential is currently limited by its reliance on large pre-trained language models, which can be slow and resource-intensive, and its struggles with ASR errors and command segmentation. Improvements in these areas could greatly enhance the system's usability and effectiveness.